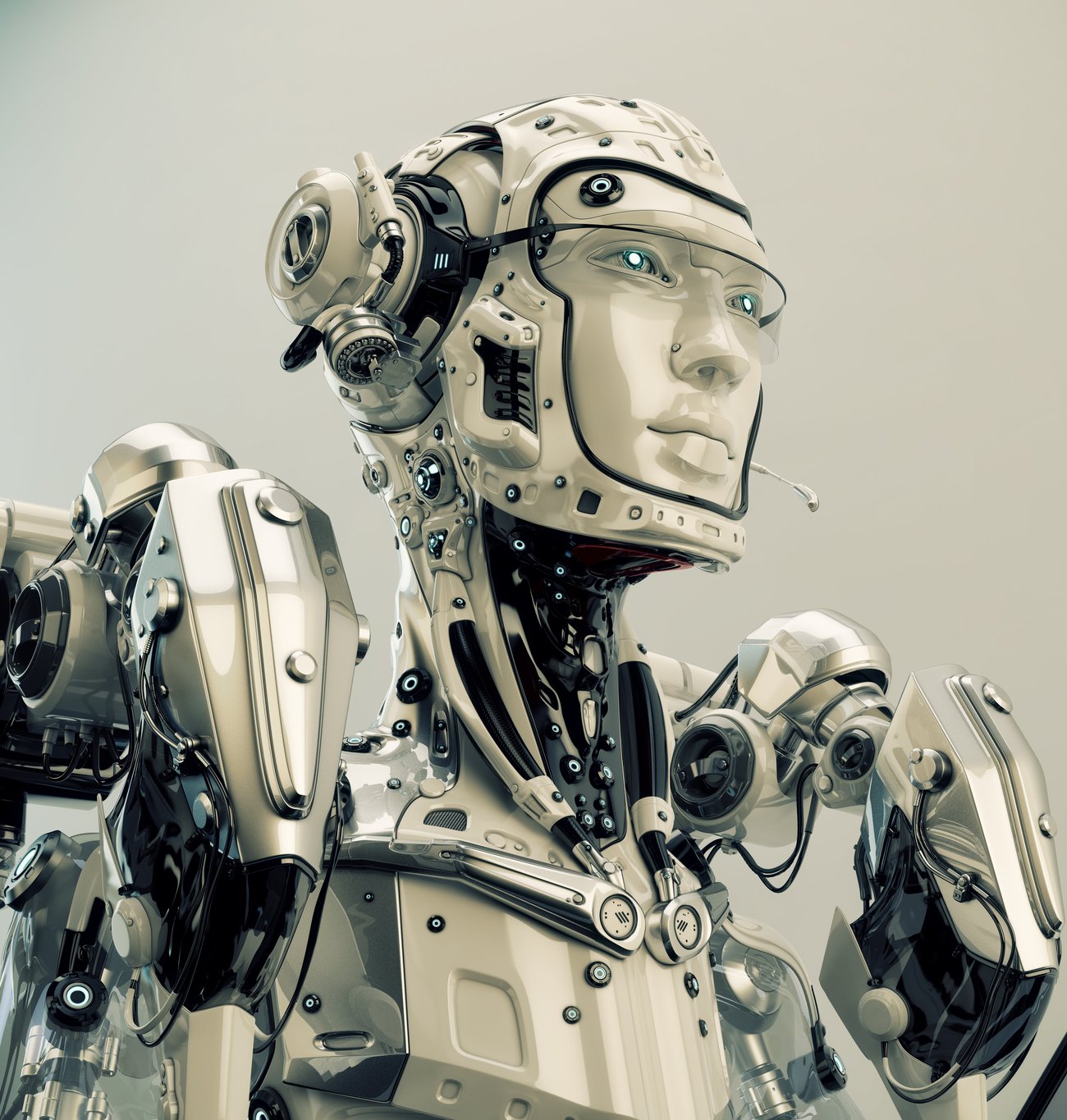

Victoria University takes on challenge of building programme to make robots “see” like humans

Ever wonder why there aren’t any robots loading dishwashers for us at home yet? That’s because robots generally have a problem seeing things the way humans do, especially in a real-time, dynamic environment. Cracking this problem is what a researcher at Victoria University in Wellington will be working on.

When humans process visual information, a complex set of processes is at work. Today’s robots have limited intelligence when it comes to sorting through clutter, telling apart different objects in a complex environment and locating what is important. If a robot could “see” and “sense” the way humans do, it would be capable of much more.

“I think that the ability to ‘see’ is more than just taking in a stream of data through sensors. In order for a robot to be able to begin to ‘see’, it must be able to perceive patterns of interest within a visual scene, i.e. a scene constructed of wavelengths of light, of interest to humans,” says Dr Will Browne from Victoria University’s School of Engineering and Computer Science.

Dr Browne is supervising Syed Saud Naqvi, a PhD student from Pakistan, who is working on an algorithm to help computer programs and robots view static images in a way that is closer to how humans see.

Sorting by colour, shape and texture

Many object detection algorithms already exist: some focus on patterns, textures or colours, while others focus on the outline of a shape. Saud’s algorithm extracts the most relevant information for decision-making by selecting the best of these algorithms to use on each individual image.

“The defining feature of an object is not always the same. Sometimes it’s the shape that defines it, sometimes it’s the textures or colours. A picture of a field of flowers, for example, could need a different algorithm than an image of a cardboard box,” Saud says.
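To make the idea of choosing a different detector for each image more concrete, here is a minimal Python sketch. It is not Saud’s algorithm: the three image descriptors, the detector labels and the stored examples are all hypothetical, and the selector is a simple nearest-neighbour lookup that picks whichever detector worked best on the most similar image seen before.

```python
from math import dist

# Hypothetical image-level descriptors, each scaled to 0..1:
# (colour_variance, texture_energy, edge_density).
# Illustrative only; not taken from the original research.
# "Past experience": images for which we already know the best detector.
EXPERIENCE = [
    ((0.9, 0.2, 0.1), "colour"),   # e.g. a field of flowers
    ((0.2, 0.8, 0.3), "texture"),
    ((0.1, 0.1, 0.9), "shape"),    # e.g. a plain cardboard box
]


def select_detector(descriptor, experience=EXPERIENCE):
    """Return the detector that worked best on the most similar past image.

    Similarity is plain Euclidean distance between descriptors
    (1-nearest-neighbour), an assumption made for this sketch.
    """
    _, best = min(experience, key=lambda item: dist(item[0], descriptor))
    return best


if __name__ == "__main__":
    print(select_detector((0.85, 0.25, 0.15)))  # colourful scene
    print(select_detector((0.15, 0.2, 0.8)))    # strong outlines
```

A learned selector like this only needs a summary of each new image, rather than running every detector and comparing the results.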

Work on the algorithm was presented at this year’s Genetic and Evolutionary Computation Conference (GECCO) in Vancouver, Canada, and received a Best Paper Award.

From static to real world

The computer vision algorithm is going to be taken even further through a Victoria Summer Scholarship project that will apply it to object detection tasks on a dynamic, real-world robot. This will take the algorithm from analysing static images to analysing moving, real-time scenes. The research aims to figure out how a robot can navigate its environment by separating objects from their surroundings.

How a robot “sees”

“Our novel algorithms automatically detect important areas of interest (rather than naïvely classifying the whole scene) through learnt combinations of features and cues from previous experiences. Not only can they detect important patterns, they can vary how they detect important patterns depending on the composition of the scene. For example, in simple scenes they may look for the most homogeneous object, whereas in complex scenes with cluttered backgrounds they could search for the most different coloured object,” Dr Browne adds.
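The scene-dependent behaviour Dr Browne describes can be illustrated with a rough Python sketch. It assumes the image has already been split into regions, each summarised by a mean colour and a variance; the region format, the clutter measure (average region variance) and the 0.2 threshold are hypothetical choices for illustration, not details of the published algorithm.

```python
from math import dist
from statistics import mean


def pick_region(regions, clutter_threshold=0.2):
    """Return the index of the region judged most important.

    regions: list of (mean_rgb, variance) pairs with colours scaled to 0..1
             (a hypothetical representation for this sketch).
    Simple scene    -> the most homogeneous region (lowest variance).
    Cluttered scene -> the region whose mean colour is furthest from the
                       average colour of the whole scene.
    """
    # Treat high average variance across regions as a sign of clutter.
    clutter = mean(var for _, var in regions)
    if clutter < clutter_threshold:
        return min(range(len(regions)), key=lambda i: regions[i][1])

    # Average colour of the scene, channel by channel.
    scene_colour = tuple(mean(rgb[c] for rgb, _ in regions) for c in range(3))
    return max(range(len(regions)), key=lambda i: dist(regions[i][0], scene_colour))


if __name__ == "__main__":
    simple = [((0.5, 0.5, 0.5), 0.10), ((0.6, 0.6, 0.6), 0.02), ((0.4, 0.4, 0.4), 0.15)]
    cluttered = [((0.5, 0.5, 0.5), 0.30), ((0.4, 0.5, 0.5), 0.35), ((0.9, 0.1, 0.1), 0.25)]
    print(pick_region(simple))     # most homogeneous region
    print(pick_region(cluttered))  # the odd red region
```

In the real system the choice of strategy is learnt from previous experience rather than fixed by a hand-set threshold, as Dr Browne explains above.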

“Humans use different features and cues to perceive what is important in a scene, e.g., colour, contrast, size of object, and so forth. The biggest problem that Saud had to solve was to identify what features and cues were most important in different types of scenes for identifying areas of interest. For example, the colour red is very useful for detecting a cricket ball, but only partly useful for detecting a snooker ball, especially when the algorithm does not know anything about sport and just has the images available to it,” he notes.

To interact with the environment intelligently, both humans and machines need to “sense” the environment they are in. Teaching robots how to sense an object of interest is what Saud will be working on. “We have other robots that detect distances to these obstacles/objects and have algorithms to plan what to do next, but that is a different story! The heart of this story is that we have multiple ways where we can take the senses and perceive important locations within the sensed environments.”

Applications

Dr Browne says there are a number of uses for this kind of technology, both now and in the future. Immediate possibilities include automatically captioning photos on social media and other websites with information about a photo’s location or content.

“Most of the robots that have been dreamed up in pop culture would need this kind of technology to work. Currently, there aren’t many home helper robots which can load a washing machine—this technology would help them do it.”

It’s early days, but Dr Browne says that in the future this kind of imaging technology could be adapted for use in medical testing, such as identifying cancer cells in a mammogram.

Google, for instance, already has algorithms that can automatically caption photographs but has yet to come up with a perfect way to describe complex photographs with multiple subjects.

Dr Browne notes: “There are many steps to be able to tag a photograph, where our algorithm considers a preliminary step. That is, what is the most important part(s) of the image to tag? This is much more compact and simple than storing loads and loads of patterns from images and then looking for commonality among them to the new image.”

More: Idealog’s previous story on Google’s auto captioning project.