Love them or hate them, killer drones are here to stay. Why they can be better than human soldiers

It is a controversial subject, and one without a black-and-white answer, but renowned roboticist Professor Ronald Arkin is of the view that killer robots actually have a positive role in the unfortunate event of warfare.

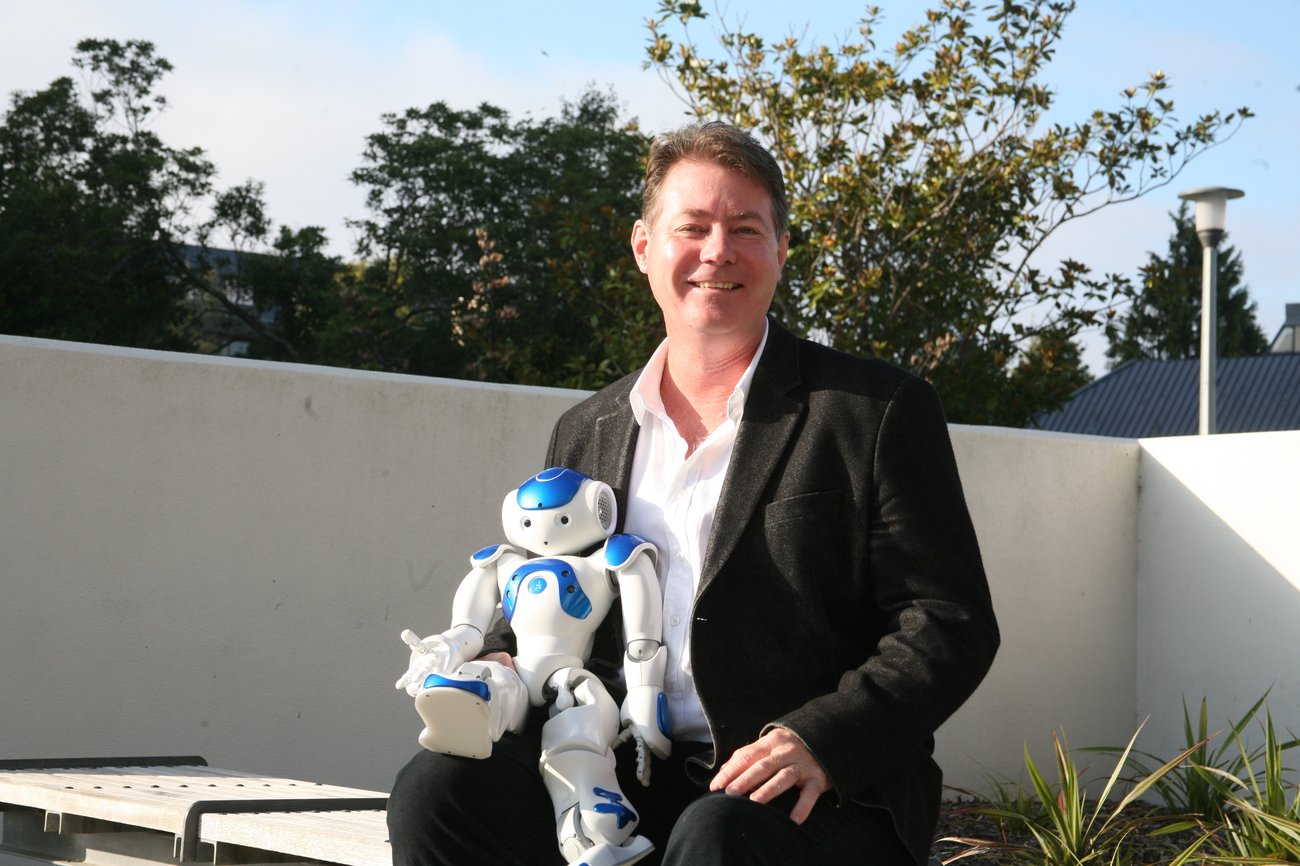

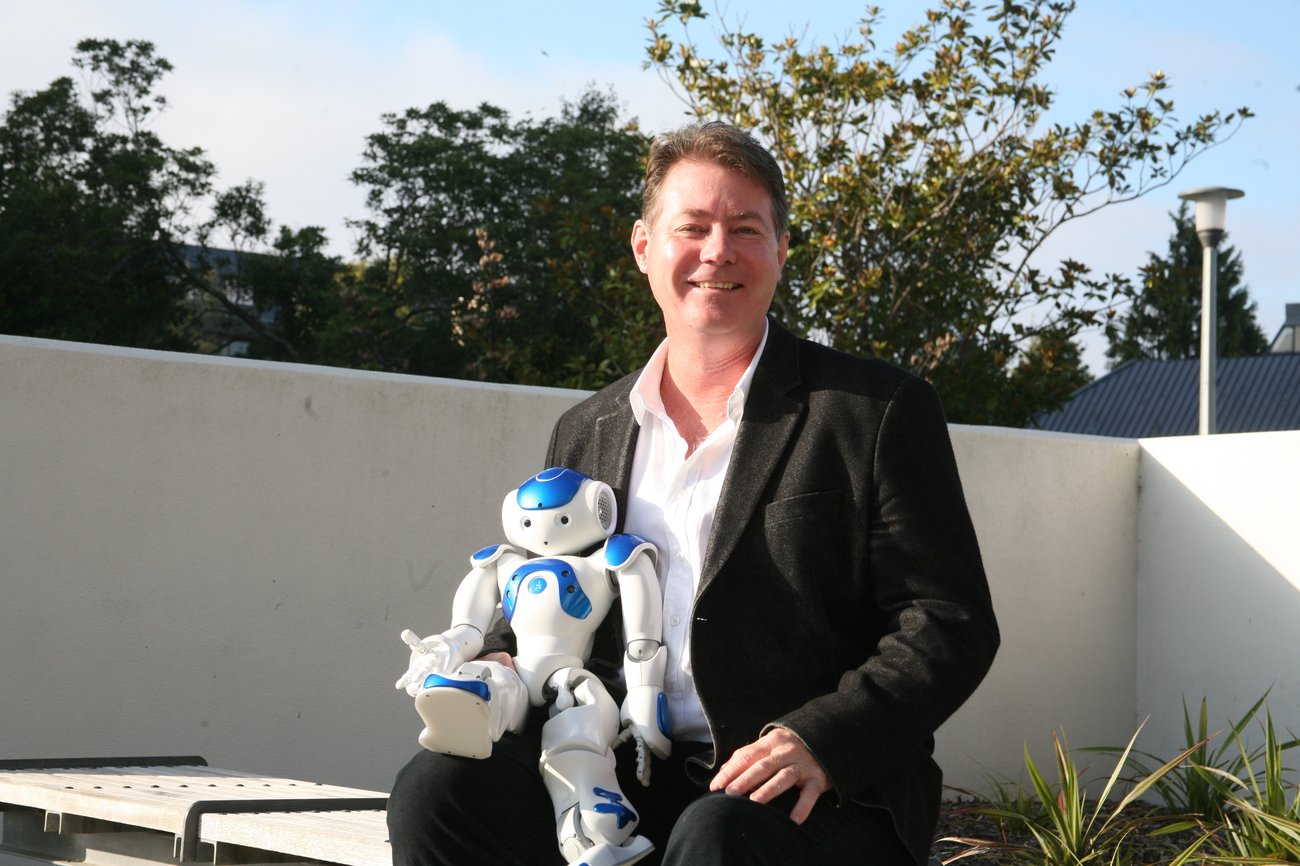

Arkin is of the view that robots, properly used in warfare, could save soldiers’ lives and ultimately reduce civilian casualties. He will be in New Zealand and will give a public lecture on this topic at the University of Canterbury on Tuesday (March 31).

Arkin, who is from the College of Computing at the Georgia Institute of Technology, will argue that robots offer opportunities for better outcomes for non-combatants (in this case, human bystanders), because they can be built to be targeted and precise, reducing potential harm on the battlefield. That is according to Sean Welsh, a University of Canterbury doctoral student who is researching robots and human interaction with technology.

Not sci-fi but scientific fact

Israel’s killer drones

Welsh told Idealog that, although it is not quite Star Wars-like, as in the movie Attack of the Drones, the use of unmanned military drones is already prevalent in the US and other nations.

“The US Patriot Missiles already do most of the smart (actions)…We are not quite at the Star Wars stage but use of drones is a scientific fact, not science fiction,” says Welsh, whose own research is into robots and their moral decision-making.

He adds there are many upsides to the use of robots, and it is not all doom and gloom.

“The introduction of robots in war changes the premises of warfare and radically redefines the soldiers’ experience of going to war.

“Robots could do better than humans as war-fighters because they provide better sensors, such as seeing through walls. They have an absence of self-preservation emotions. They have an ability to re-compute scenarios in the light of fresh data and most importantly they can have a complete focus on the strictures of military duty and the rule of international humanitarian law.

“When the United States military went into Iraq in 2003, it employed only a few robotic planes, so-called drones. Today, thousands of Unmanned Aerial Vehicles (UAVs) and Unmanned Ground Vehicles (UGVs) are being used, mainly in Iraq and Afghanistan, but also in Pakistan and lately for the military intervention in Libya.

“Most robots are unarmed and used for surveillance, reconnaissance, or destruction of mines and other explosive devices. However, in the past years, there has been a dramatic increase in the use of armed robots in combat. New technology permits soldiers to kill without being in any danger, further increasing the distance from the battlefield and the enemies.

“If the use of killer robots is not properly addressed, or if they are hastily deployed, it can lead to serious problems in the future. Ron’s talk will encourage people to think of ways to approach the issues of restraining lethal autonomous weapons systems from illegal or immoral actions in the context of international humanitarian and human rights law, whether through technology or legislation,” Welsh says.

Arkin wrote in a paper, Lethal Autonomous Systems and the Plight of the Non-combatant: “As robots are already faster, stronger, and in certain cases smarter than humans, is it really that difficult to believe they will be able to ultimately treat us more humanely in the battlefield than we do each other, given the persistent existence of atrocious behaviors by a significant subset of human warfighters?”

Bad-as humans at war

He adds that there have been persistent failures and repeated war crimes despite efforts to eliminate them through legislation and advances in training.

“Can technology help solve this problem? I believe that simply being human is the weakest point in the kill chain, i.e., our biology works against us in complying with International Humanitarian Law.

“Also the oft-repeated statement that ‘war is an inherently human endeavor’ misses the point, as then atrocities are also an inherently human endeavor, and to eliminate them we need to perhaps look to other forms of intelligent autonomous decision-making in the conduct of war. Battlefield tempo is now outpacing the warfighter’s ability to be able to make sound rational decisions in the heat of combat.”

Arkin adds that he believes robots, properly regulated and well built, can outperform human soldiers when it comes to adhering to international humanitarian law.

NZ’s position

Welsh notes that NZ, as part of the international community on war treaties and international human rights conventions, has no option but to start thinking about its position on robots used in battle or combat.

He adds that NZ has to think about its position on, for instance, the deployment of Samsung’s robots on the North Korean border, or how the use of drones in battle should be regulated.

About 40 countries are currently building or developing military robots. In April this year, countries will meet at the Convention on Certain Conventional Weapons in Geneva to discuss the impact and regulations related to this.

Some of the drones in use include Raytheon’s Phalanx Close-In Weapon System (CIWS) (described as a rapid-fire, computer-controlled, radar-guided gun system designed to destroy incoming anti-ship missiles); Israel Aerospace Industries’ Harpy and Harpy-2 missiles (described as a “fire and forget” autonomous weapon designed to destroy enemy radar stations); MBDA’s Dual Mode Brimstone anti-armor missile; and the Samsung Techwin SGR-A1 sentry gun. (Sourced from this site)

International human rights activists want the use of killer drones banned, while others want more international regulation of the use of drones in warfare.